Architect in the Machine

Today, architecture as a discipline finds itself in a moment of crisis and opportunity. The phrase “intelligent design” (not to be confused with the creationist-inspired, culture-warrior sense in which the term was used not so long ago) has found direct relevance in the field of architecture: not in a theological way, nor in a science-curriculum way, but in a technological way. The term “artificial intelligence” (or AI), as we understand it today, was coined in 1956. It reemerged with real potential in the public imagination when IBM’s Deep Blue defeated chess grandmaster Garry Kasparov in 1997. Research has progressed rapidly in the last 20 or more years, particularly in the last decade, as big data (access to massive datasets and the ability to easily store them in the cloud), GPU performance, and processing power have all improved significantly. Architecture also underwent massive technology-driven changes during this period, from the adoption of free-form digital modeling software like CATIA, popularized by Frank Gehry and Greg Lynn, to the ubiquity of building information modeling (BIM).

Artificial intelligence is now a broad umbrella term for various types of computer algorithms and models that mimic human intelligence. The most common subtype of AI with relevance to our field is machine learning, a term that describes various types of networks and models that teach themselves, or “learn,” from the datasets they’re provided in order to automate or optimize some output. It’s also important to distinguish intelligent computational processes from parametric, or generative, design scripting, a kind of simulation that has been used in the field for over a decade. Mike Haley, senior director of AI and robotics at Autodesk, gives an example to help illustrate the difference: A generative design program could take a series of parameters and inputs and give the designer 1,000+ iterations from which to choose; AI can then take all those options and sort and group them by different traits or characteristics (or even by which is “best,” if one can define it) to make reviewing and choosing a desired option much easier for the designer. While this is much faster and more convenient for the designer, it is also apparent that AI could potentially replace the designer and her labor completely in this scenario: The AI could simply be trained to evaluate and weigh certain characteristics and decide the best option(s) all on its own.
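For readers who think in code, the workflow Haley describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the design parameters (floors, footprint, glazing ratio), the `score` function standing in for a designer's definition of “best,” and the choice of k-means as the grouping method are all hypothetical, and none of it reflects Autodesk's tools or any real product's API.

```python
import random

def generate_options(n, seed=0):
    """Stand-in for a generative design script: sample n massing options."""
    rng = random.Random(seed)
    options = []
    for _ in range(n):
        floors = rng.randint(2, 12)              # hypothetical parameter
        footprint = rng.uniform(200.0, 800.0)    # square meters, hypothetical
        glazing = rng.uniform(0.2, 0.8)          # window-to-wall ratio, hypothetical
        options.append({"floors": floors, "footprint": footprint,
                        "glazing": glazing, "floor_area": floors * footprint})
    return options

def traits(option):
    """Scale two traits to roughly 0..1 so neither dominates the distance."""
    return (option["floor_area"] / 9600.0, option["glazing"])

def group_options(options, k=4, iterations=25, seed=0):
    """Plain k-means: return one cluster label per option."""
    points = [traits(o) for o in options]
    rng = random.Random(seed)
    centers = rng.sample(points, k)
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assign each option to its nearest cluster center.
        labels = [min(range(k),
                      key=lambda c: sum((a - b) ** 2 for a, b in zip(p, centers[c])))
                  for p in points]
        # Move each center to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centers[c] = tuple(sum(axis) / len(members) for axis in zip(*members))
    return labels

def score(option):
    """Hypothetical definition of "best": more floor area, glazing near 40%."""
    return option["floor_area"] * (1.0 - abs(option["glazing"] - 0.4))

options = generate_options(1000)
labels = group_options(options)
for c in sorted(set(labels)):
    members = [o for o, lab in zip(options, labels) if lab == c]
    top = max(members, key=score)
    print(f"group {c}: {len(members)} options, top candidate has {top['floors']} floors")
```

The last few lines are the part that raises the labor question: once `score` is written down, picking the top candidate in each group no longer requires a designer in the loop.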

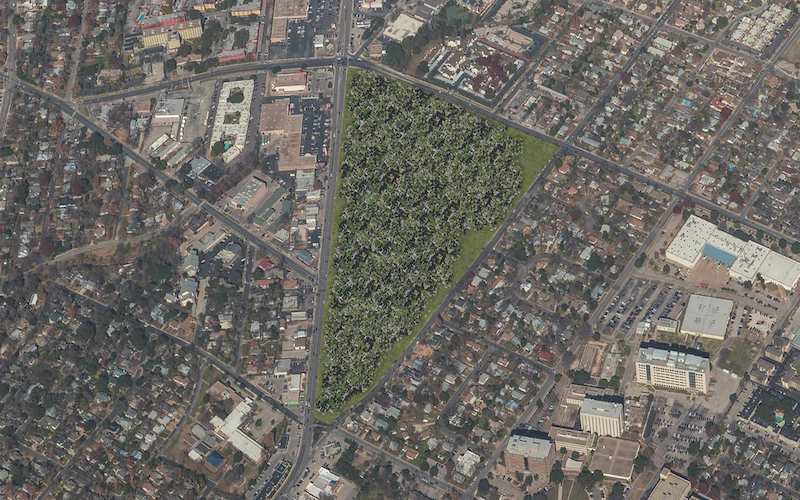

The allied disciplines of real estate and construction adopted AI into their working processes earlier: Katerra is using AI in its cross-laminated timber production factories to evaluate and optimize raw material, and XKOOL, an appropriately named Chinese startup that branched out of OMA, is using AI to automate yield studies and property potentials. How architects and designers could use the technology, however, is still ripe for discussion.

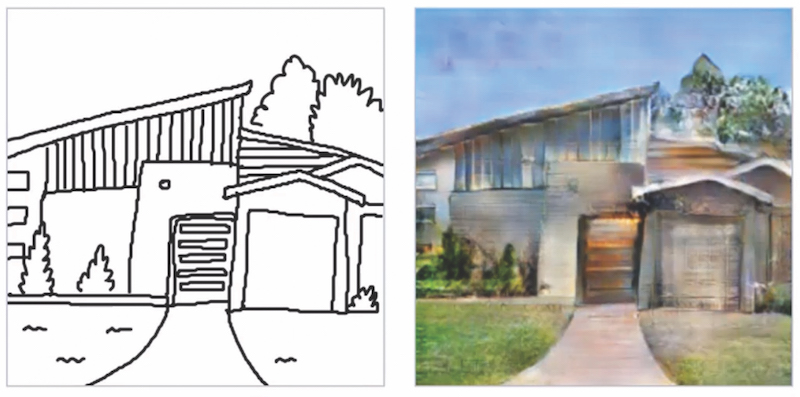

Though AI is a long way from being as widely adopted in architectural practice as more mainstream software types such as BIM, researchers at both the corporate and academic levels see the promise of intelligence in design and are exploring it at a variety of scales and in a variety of applications. One popular model of machine learning for image production and manipulation is known as a generative adversarial network (GAN), which is made of two neural networks that “compete” against one another in order to create imagery. The “creator” network (usually called the generator) “creates” images that often begin as arbitrary distributions of pixels; these are refined through feedback from a second “discriminator” network, which uses a provided dataset to learn what types of images it should be looking for. These two networks then take turns looping back and forth, in creation and discrimination, until the discriminator can no longer tell what is a “real,” or provided, image and what is a “fake,” or created, image. In a relatively simple example, HDR’s Data-Driven Design Group has developed a GAN called “GANHouse,” which can turn a quick hand-sketch into a more realistic-looking and detailed image of a building. The resulting image exhibits a blurriness and unresolved nature that more closely resembles a watercolor than a photorealistic rendering, carrying obvious traces or glitches of an intelligent but unspecific process that makes the application at once intriguing and limited in its range of uses.
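The back-and-forth between the two networks can be shown with a deliberately tiny Python sketch. It is a toy under heavy assumptions, not how GANHouse or any production GAN is built: the “images” are single numbers, the “provided dataset” is numbers near 4, and each “network” is a single linear function with hand-written gradients. Real GANs use deep networks trained with a machine learning framework, and a toy this small may oscillate rather than settle.

```python
import math
import random

def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)

def train_toy_gan(steps=2000, lr=0.01, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0   # generator: fake = a * noise + b
    w, c = 0.1, 0.0   # discriminator: D(x) = sigmoid(w * x + c)
    for _ in range(steps):
        real = rng.gauss(4.0, 0.5)    # one "provided" sample (assumed data)
        noise = rng.gauss(0.0, 1.0)   # arbitrary starting point for the generator
        fake = a * noise + b

        # Discriminator turn: push D(real) toward 1 and D(fake) toward 0.
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        grad_w = (d_real - 1.0) * real + d_fake * fake
        grad_c = (d_real - 1.0) + d_fake
        w -= lr * grad_w
        c -= lr * grad_c

        # Generator turn: adjust a and b so the updated D rates the fake as real.
        d_fake = sigmoid(w * fake + c)
        grad_a = (d_fake - 1.0) * w * noise
        grad_b = (d_fake - 1.0) * w
        a -= lr * grad_a
        b -= lr * grad_b
    return (a, b), (w, c)

(gen_a, gen_b), (disc_w, disc_c) = train_toy_gan()
print("generator scale and offset:", gen_a, gen_b)
print("discriminator weight and bias:", disc_w, disc_c)
```

Each pass through the loop is one round of the “creation and discrimination” described above: the discriminator updates first, then the generator responds to the discriminator's new judgment.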

Research units at other large practices, such as Zaha Hadid Architects Computation and Design Group (ZHA|CODE) and Foster + Partners Applied Research and Development (ARD), have invested heavily in exploring machine learning applications for architectural design, in tasks such as environmental and performance simulation, building optimization, spatial configuration, and visual connectivity. Thus far, while these research projects have made important strides, they have tended to address discrete tasks or design studies rather than more encompassing, intelligently led design processes. A recent paper on the Foster + Partners ARD work on complex spatial planning concludes that:

Research in optimization, classification, sorting, and machine learning more generally are only possible today within those practices and institutions that can allow investment, in terms of time and resources, into computational research. This tends to occur on a centralized level (research centers, universities, and large design consultancy companies), and is much more difficult (and rare) for small-medium design practices, start-ups, and individuals to engage in.

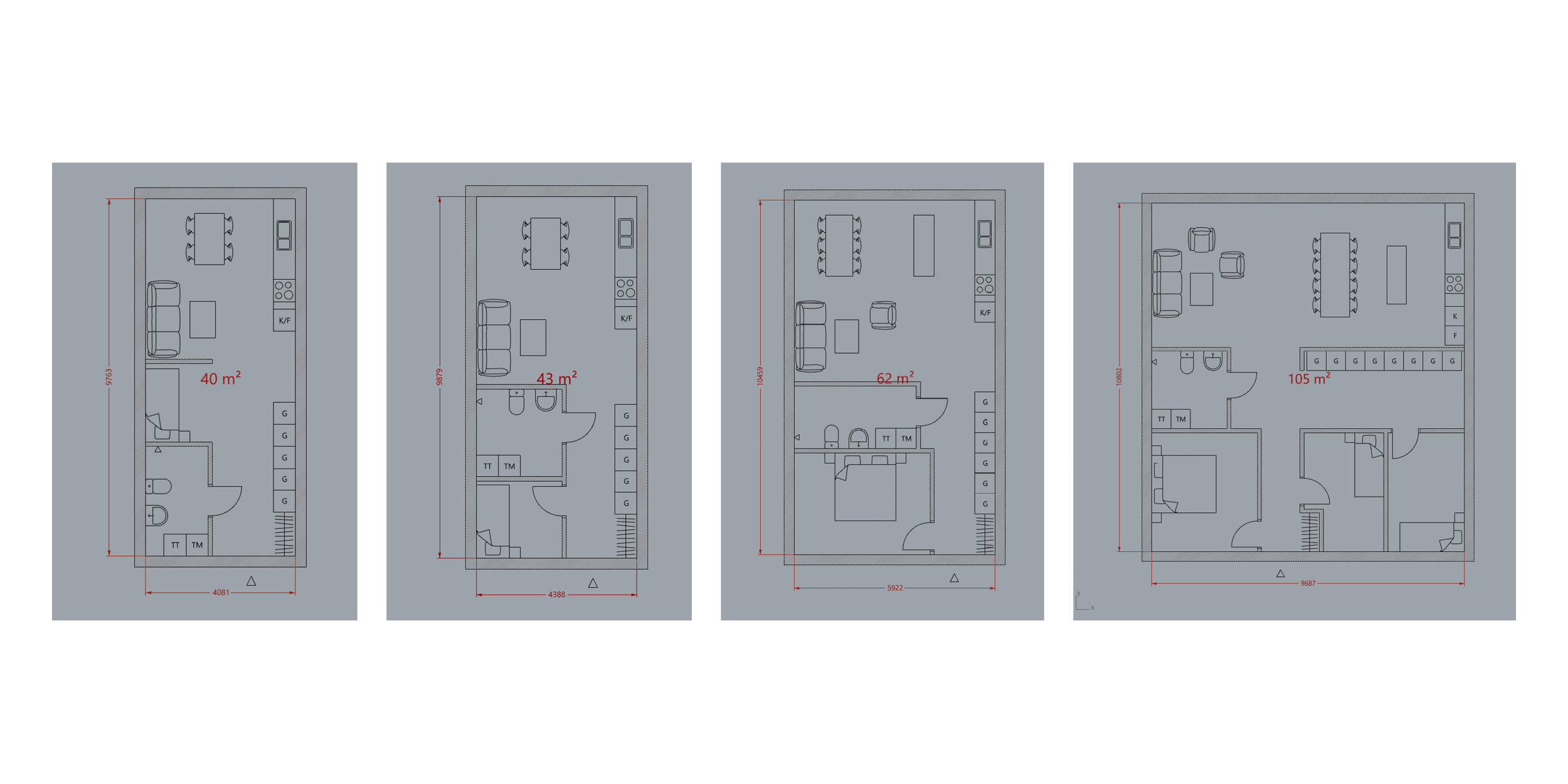

In addition to limited resources and time to invest in AI research, another potential barrier to more widespread research has to do with accessibility and computational expertise: While some architectural designers are familiar with visual scripting plug-ins such as Grasshopper for Rhinoceros, fewer are fluent in more traditional coding languages like Python, in which much AI-for-design is currently developed and tested. Sweden-based Finch 3-D seeks to bridge that knowledge gap with a plug-in for Rhino that functions like an extension of existing tools, with no knowledge of Grasshopper (much less of coding itself) required for designing. What is fascinating about Finch is how the intuitive tools and workflows it currently provides span a range from banal to potentially groundbreaking tasks. In some ways, Finch gives BIM-like functionality to Rhino at an earlier stage of the design process: Stairs are stairs, rather than components of extrusions whose rise and run must be manually calculated; polylines become building massings that adapt to site topography with parametric operability for number of floors, floor-to-floor height, roof types, etc.; and other processes automate the minutiae of building modeling and speed up iteration time. At its most powerful, though, Finch combines machine learning with evolutionary solvers to determine how to most efficiently divide freeform buildings into desired area sizes, and it creates adaptive floor plans for residential unit interiors based on a bounding outline. This algorithm can determine not only where bedrooms, bathrooms, living rooms, and kitchens should be located, but also whether the kitchen has room for an island, a kitchen table, and a full dining space, or how many bedrooms can be efficiently fitted into the given space.
Pamela Nunez Wallgren, co-founder of Finch, sees the future of AI as liberating for designers: “With new software like Finch assisting us with a lot of the technical aspects of the profession, the role of the architect will become more creative and focused on designing great spaces, adding value to the project inhabitants and our cities more widely.”

As of this writing, Finch continues to be developed in beta, with the hope of rolling out more algorithms and tools that can speed up the design process and offer new solutions within it.

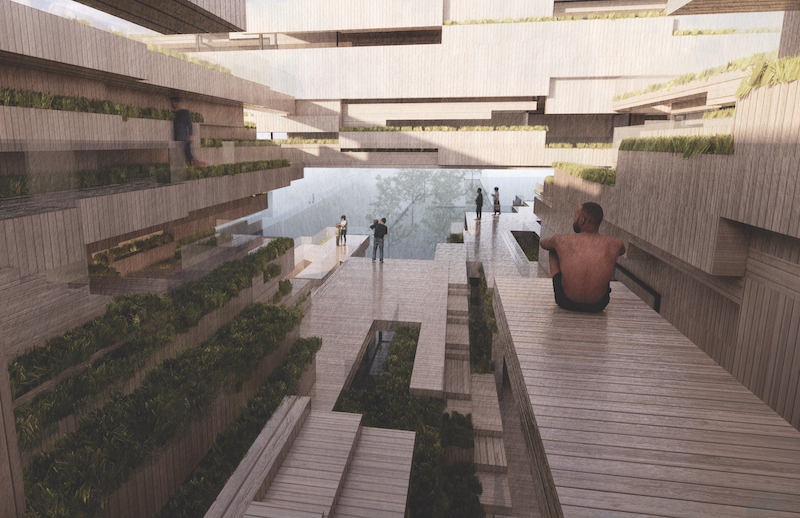

Perhaps the most interesting and promising strides (at least, among those that are openly shared and publicly available) are coming through academic studio work that investigates and speculates on the application of AI in the design process. At The University of Texas at Austin School of Architecture, Assistant Professor in Architecture Computation Dr. Daniel Koehler is leading a series of design studios, called “Paperclip Studio,” that investigates the use of machine learning and computation in new paradigms of housing and urban form. Koehler sees the promise of AI as offering the ability to completely rethink our modes of living in the city, synchronous with technological developments like urban farming and rapid, automated construction. Throughout history, Koehler says, there has been a divide between urbanism and agriculture: When we thought “city,” we were thinking about something separated from but dependent upon the production of our food. Urban farming has fundamentally redefined that, allowing us to completely rethink the form of the city. He says:

What comes with automation or digitizing food-production [is that] the whole image of the city [as separate from agriculture] can fundamentally change. What was really successful about [the Paperclip Studio] was what a building looks like when you’re living with food development, when you live with [the urban and the rural] together. What mode of living comes when there’s no hinterland?

The result is a forestlike aggregation of “building parts” that considers totally new architectural super-components and relationships among them: illegible aggregations and a high density of units that share open-air terraces, neighborhood gardens, and community amenities. These aggregations address the question of what the organization of architecture itself, rather than the solutions of engineers and sustainability consultants, can contribute to ecological thinking through intelligent computation. “Of course, [things like] insulation and solar panels on a building are really important, but in the end, those are technical add-ons. What could you contribute as an architect?” Koehler asks. “It is clear AI is interesting because you can render the whole building [itself] as an ecology of building parts, like a forest, with openness and pluralities of entities, autonomous from one another but being able to communicate.” For the Paperclip Studio, the form of these buildings is something of an accelerated Habitat 67 by Moshe Safdie with the idiosyncratic detailing of Carlo Scarpa, created with intelligent computational design. Koehler says, “With an artificial network, you search for coherences, new insights, higher variability, or for things you could not think before. [You] can compute or generate ventilation patterns; [you] can create a building assembly in a way that it produces artificial wind flow. [You] can incentivize an ecology of building parts.” For Koehler, artificial intelligence allows designers to create not only new types of buildings, but entirely new ways of thinking about ecologies and what they mean in the Anthropocene, especially when facing building typologies, like urban housing, that have too often been the result of mass-produced solutions.
“Why this looks like a forest, partly, is because we [require] every room to have a certain amount of sunlight — very banal at first, but it’s exactly these banalities that are necessary for housing that leads to reductionist designs…. Compare a tree with a mono-surface of a roof: A tree can absorb or buffer more energy because it has 1000 times more surfaces, more envelope. So as a designer, I’m really suspect of saying, ‘We have to limit the form of the building’.”

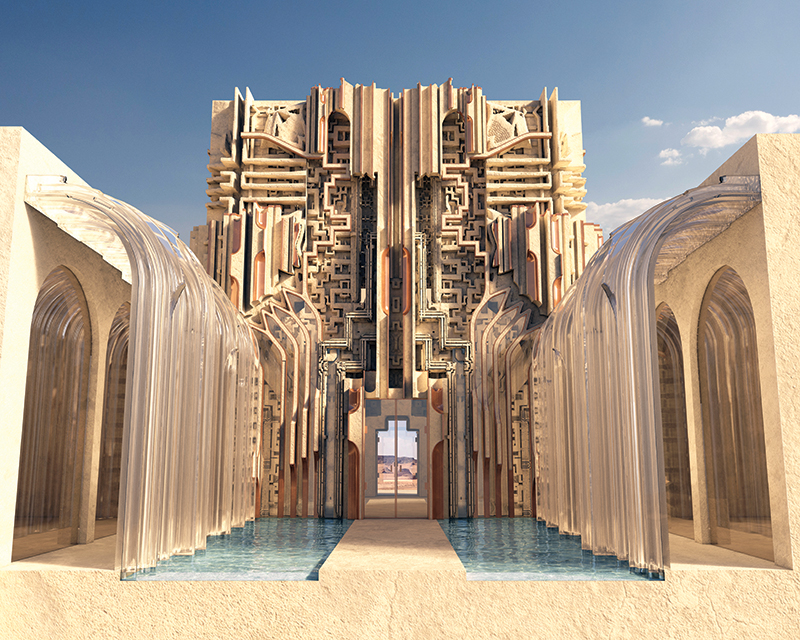

While built projects with clear markers of artificial intelligence baked into the design process are few and far between, a recently shortlisted competition proposal by Mark Foster Gage Architects (MFGA) suggests what is possible. MFGA proposed a luxuriously detailed desert resort in the Middle East that would be built into the existing rock formations of the site where possible. The design was developed with AI, but not so much for the purpose of technological novelty as for aesthetics: The goal was a solution that wouldn’t oversimplify the source of inspiration. Gage describes their reasoning: “This region has a rich history of using patterns in weaving, jewelry, tile work, etc., so we wanted to tap into that. But we also didn’t want to do the ‘contemporary’ thing, where you just laser-cut historic patterns or abstractions of them into metal panels and use it as a screen.” Gage has been critical in the past of oversimplified diagrams or metaphors in contemporary architecture and sees AI as a potential tool for achieving a level of inherent complexity that is currently too intensive or cumbersome for designers to wrap their heads around. “We wanted to, in a sense, splice the genetics of this pattern material in a way that you couldn’t tell where one [left off] and the other began. The only way we could think to do this was with AI, as humans tend to abstract things when confronted with complexity, and we didn’t want to lose the complexity, the richness, of either [the historic material or our architectural ambitions]. AI is great at patterns.” This pragmatic approach to the technology is consistent with his broader outlook on the application of AI; he says he’s “far more interested in what people do with it than the fact that they used it.”

Gage’s words seem to track with how researchers and computational design experts are viewing the future of intelligence in design, including concerns about the possible downsides. “I’m sure some people will do interesting things with AI in architecture in the future, but I anticipate that most of AI’s influence in architecture will be used for efficiency, building performance, and making buildings even cheaper,” Gage says. Many share the concern that neoliberal capital will adopt AI in the name of efficiency, the bottom line, and the ostracization of labor rather than, as Gage sees its potential, in pursuit of a more beautiful world. Of course, Gage’s use of the technology in designing a luxuriously gilded, secluded, and secretive Middle Eastern resort raises questions about when architects are participating in late neoliberal capitalism rather than resisting it, a resistance that has been a topic of his writings for years.

Galo Canizares, a lecturer at the Texas Tech University College of Architecture and author of “Digital Fabrications: Designer Stories for a Software-Based Planet,” shares the outlook that AI might not currently be a catalyst for architectural revolution. Canizares describes AI as essentially a hyper-advanced version of parametric design software — which architects have already mostly integrated into their workflows — and says it therefore isn’t likely to make massive changes to the industry in the near future. “All of the things we consider world-changing end up being boring and normal eventually, like plastic or voice recognition…. AI will revolutionize how we fight wars before it changes how we build houses.”

Still, technological upheavals have always changed how architects design and propose new worlds. AI is going to disrupt, automate, and streamline countless industries (and jobs) in the coming decades. Whether architecture itself is created by artificially intelligent machines in lieu of the traditional “master-builder” architect certainly remains to be seen, but architecture will have to deal with the way automation and intelligence change the city and our built environments regardless. Koehler explains the necessity for architecture to address intelligence because of its ramifications for climate change and the potential for non-human solutions: “When you really accept there’s a limit to human thinking and how you address problems as a human (because, in the end, it was [modernist] humanism that brought this ecological mess), what if you [let] data generation reflect back into your process and let the machine offer [new solutions]? … There’s a critical role of research in architecture through design. Who, if not architects, are proposing new alternatives?” In other words, the world (and the technology that creates it) continues to evolve; if architects, those of us who have been trained to imagine the near future, don’t adapt and lead in projecting a vision of what could be better in that world, who will? Will we leave it to Big Tech executives and venture capitalists who see dollar signs in disruption (especially in the relative dinosaur that is the AEC industry)? Or could we actually use intelligent design to promote an evolution of something better?

Davis Richardson is a designer with Perkins&Will in Austin and founder of DROOOPI.
